Defining success or failure is critical to every marketing strategy, and measuring it is not always as straightforward as it may seem.

If sales on your eCommerce site are up 10% over the previous week, is this a success? Not necessarily. Maybe this week of the year is historically your busiest, and your sales should have been up 20% relative to the previous week.

What if sales are up 20% relative to the same week of the previous year? This does not necessarily represent a successful week either, since sales could have already been trending 30% higher year over year.
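To make the comparison concrete, here is a minimal Python sketch that judges a week against an expected growth rate instead of against raw growth. The sales figures and the `vs_expectation` helper are hypothetical. They mirror the 10%-vs-20% and 20%-vs-30% scenarios above.

```python
def vs_expectation(baseline: float, actual: float, expected_growth: float) -> float:
    """Return how far `actual` lands from the expected value, as a fraction.

    The expected value is the baseline (previous week or same week last year)
    grown by `expected_growth`. A negative result means we fell short.
    """
    expected = baseline * (1 + expected_growth)
    return (actual - expected) / expected


# Hypothetical: sales up 10% week over week, but this week usually brings +20%.
wow = vs_expectation(baseline=100_000, actual=110_000, expected_growth=0.20)

# Hypothetical: sales up 20% year over year, but the trend was already +30%.
yoy = vs_expectation(baseline=100_000, actual=120_000, expected_growth=0.30)

print(f"Week over week vs. expectation: {wow:+.1%}")
print(f"Year over year vs. expectation: {yoy:+.1%}")
```

Both results are negative (about -8.3% and -7.7%), even though raw sales grew in each case.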

What if, instead, you could determine with greater certainty that the past week performed better than projected… 45% better?

Setting Your Benchmarks

Defining, visualizing and analyzing your KPIs are challenging exercises in their own right. Setting benchmarks for those KPIs is even tougher, but just as critical to ensure that stakeholders – both internal and external – are held accountable for performance.

Imagine this hypothetical: you have the perfect Marketing Measurement Model with well-defined KPIs (not metrics!), and your CMO is singing your praises. The first week goes by and it’s reporting and analysis time. All the data is collected, prepared and visualized, so now it’s time to prepare answers to the critical questions your CMO will likely ask during tomorrow’s meeting:

- What is our benchmark for success?

- Was this week a success or failure?

- Why did we hit or miss our benchmark?

If you’re struggling to come up with these answers… predictive modeling can help!

In this post, we will:

- Define a hypothetical business use case

- Outline our proposed solution and approach

- Walk through our approach to forecasting a KPI

- Share ideas for other marketing use cases for forecasting

Background & Business Objective

Let’s drill down further into our hypothetical scenario and imagine that we are the marketing manager for the Google Merchandise Store. We have an amazing marketing team – that includes YOU, the marketing manager, of course! We also have an enterprise set of website data collection and storage tools: Google Tag Manager, Google Analytics 360, and the Google Analytics 360 BigQuery Export. Most importantly, besides our amazing team, we have a well-defined Marketing Measurement Model. Here is one of its objectives with the related sub-elements:

| Element | Definition |
|---|---|
| Objective | Increase awareness of Google Merchandise brand |
| Goal | Increase sales |
| KPI | Revenue |
| Segment | Paid search (cpc) |
| Target | ? |

We want to answer the question for our meeting with the CMO next week:

Was the week of Monday, July 24 – Sunday, July 30, 2017 a success or failure for revenue from all users in the paid search (cpc) channel?

But how do we accurately define this success or failure?

The Solution

If it’s midnight before your meeting with the CMO, you can stop reading here and set a target of a 10% improvement (vs. last year or the last reporting period), as Avinash Kaushik advises:

“…Yes, and I’ve said this frequently, if all else fails just set your target for a 10% improvement. Anything, absolutely anything, can be improved by 10% with just a small amount of effort. But, you likely want to do something more complicated, and more sound, over time.”
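The 10% fallback is simple arithmetic. Here is a minimal sketch, assuming a hypothetical baseline taken from last year’s or last period’s revenue. `ten_percent_target` and `hit_target` are illustrative names, not part of any library.

```python
def ten_percent_target(baseline: float, improvement: float = 0.10) -> float:
    """Target = baseline grown by `improvement`, rounded to cents."""
    # Rounding to cents avoids floating-point noise such as 11000.000000000002.
    return round(baseline * (1 + improvement), 2)


def hit_target(actual: float, baseline: float, improvement: float = 0.10) -> bool:
    """True when actual revenue meets or beats the improvement target."""
    return actual >= ten_percent_target(baseline, improvement)


# Hypothetical revenue for the same week last year.
last_year = 10_000.00
print(ten_percent_target(last_year))      # 11000.0
print(hit_target(10_900.00, last_year))   # False
print(hit_target(11_000.00, last_year))   # True
```

As the quote suggests, this target ignores seasonality and trend, so it works best as a stopgap.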

But we doubt that’s your situation, and we have a more sophisticated, sounder, longer-term solution:

We will forecast revenue from all users for the paid search (cpc) channel based on the previous 50 weeks of historical data.
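The 50-week training window can be derived from the week being evaluated. Here is a minimal Python sketch with three assumptions: weeks run Monday to Sunday, as in our question; the evaluated week starts Monday, July 24, 2017; and the window ends the day before that week begins. `training_window` is a hypothetical helper.

```python
from datetime import date, timedelta


def training_window(target_week_start: date, n_weeks: int = 50) -> tuple[date, date]:
    """Return (first_day, last_day) of the n_weeks immediately before the target week."""
    if target_week_start.weekday() != 0:  # Monday == 0
        raise ValueError("target_week_start must be a Monday")
    first_day = target_week_start - timedelta(weeks=n_weeks)
    last_day = target_week_start - timedelta(days=1)  # the Sunday before
    return first_day, last_day


start, end = training_window(date(2017, 7, 24))
print(start, end)  # 2016-08-08 2017-07-23
```

The window starts after August 1, 2016, the first day covered by the public GA360 sample dataset, so all 50 weeks are available.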

Dataset and Tools

For the purposes of this post, we chose to use the public GA360 BigQuery dataset because it makes our example reproducible. Click here for the source code used in this forecasting analysis.

In our hypothetical example, we chose the GA360 BigQuery export because:

- There are no limits on data volume or on the number of dimensions we can query

- The dataset is large but straightforward to query and to QA against the GA UI

- It is easier to drill down from trends to answer questions at the user level

We chose R, RStudio and the relevant R packages because they are:

- Enterprise-ready (e.g., RStudio, Microsoft SQL Server R Services, Tableau’s R integration, Facebook’s Prophet forecasting library)

- Scalable and compatible with any cloud or on-premises solution via Docker (outside the scope of this post)

- Widely supported by the open-source community (Stack Overflow, GitHub, Google Developer Experts)

- Low-cost for our proof of concept to the CMO, since all of the enterprise solutions above have free, open-source versions

Our Approach

We won’t dive into the details or show much code in this post. Instead, we’ll offer a high-level overview and focus on the business use case!

Define

This is arguably the most critical and difficult phase of any predictive modeling project. Good news for us: we’ve already defined the business question to answer (you know, the one for our meeting with the CMO next week):

Was the week of Monday, July 24 – Sunday, July 30 a success or failure?

Prepare

This is typically the most time-consuming phase, but in our case it’s not so bad. We need only one simple query to pull the data from the GA360 BigQuery public dataset:

#legacySQL
-- Daily revenue from paid search (cpc) sessions in the public GA360 sample dataset
SELECT
  DATE(date) AS date,
  trafficSource.medium AS medium,
  -- totals.transactionRevenue is stored multiplied by 10^6, so divide by 1,000,000 for dollars
  ROUND(SUM(IFNULL(totals.transactionRevenue / 1000000, 0)), 2) AS transactionRevenue
FROM (TABLE_DATE_RANGE([bigquery-public-data:google_analytics_sample.ga_sessions_],
  TIMESTAMP('2016-07-01'),
  TIMESTAMP('2017-08-31')))
-- Keep only paid search sessions before aggregating
WHERE trafficSource.medium = 'cpc'
GROUP BY
  date,
  medium
ORDER BY
  date ASC,
  transactionRevenue DESC

Next, we prepare the data on our local machine using R, RStudio and the following R packages:

- bigQueryR – to download data from BigQuery to our local machine

- dplyr – to prepare data for modeling, part of the amazing tidyverse

- ggplot2 and plotly – for visualizing and exploring data
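The query returns one row per day, while our question is about a Monday-to-Sunday week. The post doesn’t show how the R code handled this, so here is one possible Python sketch. It parses the GA export’s `YYYYMMDD` date strings, also accepting `YYYY-MM-DD` in case `DATE(date)` renders them with hyphens, and rolls daily revenue up to weeks that start on Monday. The function name and sample rows are hypothetical.

```python
from collections import defaultdict
from datetime import date, datetime, timedelta


def to_weekly(daily_rows) -> dict[date, float]:
    """Sum (date_string, revenue) rows into {week_start_monday: revenue}."""
    weekly = defaultdict(float)
    for day_str, revenue in daily_rows:
        # Accept both "20170724" and "2017-07-24" by dropping hyphens first.
        day = datetime.strptime(day_str.replace("-", ""), "%Y%m%d").date()
        week_start = day - timedelta(days=day.weekday())  # back up to Monday
        weekly[week_start] += revenue
    return dict(sorted(weekly.items()))


# Hypothetical daily rows shaped like the query output (date, transactionRevenue).
rows = [("20170724", 120.50), ("2017-07-30", 80.25), ("20170731", 40.00)]
print(to_weekly(rows))
```

Sunday, July 30 rolls into the week starting Monday, July 24, and Monday, July 31 starts a new week.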

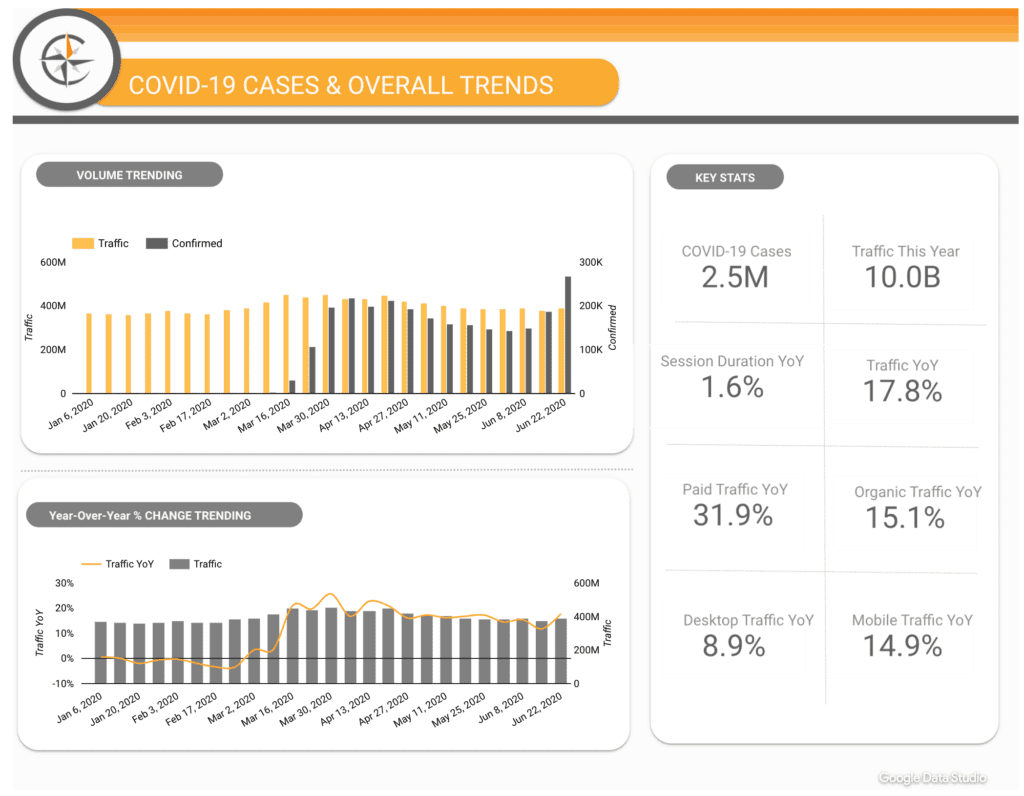

The plot in the bottom right shows:

- Black dots – the actual revenue from paid search (cpc)

- Blue shaded area – confidence intervals

- Blue line – forecasted revenue from paid search (cpc)

Understand

This is the phase where the magic happens! In a nutshell, we:

- Checked the data downloaded by our query above (e.g., renamed and formatted our columns for modeling; Prophet expects a date column named ds and a value column named y)

- Split the data into training and validation sets, since the same observations should not be used both to fit the model and to confirm how well it performs

- Used the prophet R package to fit a model on the training data and forecast revenue for the validation week

- Calculated the difference between the forecasted and actual values
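The renaming and splitting steps can be sketched in plain Python. This assumes weekly revenue keyed by the Monday that starts each week. Prophet, in both its R and Python versions, expects a date column named `ds` and a value column named `y`. The `to_prophet_rows` and `split_train_validation` helpers and the revenue values are hypothetical, and the model fitting itself is not shown.

```python
from datetime import date


def to_prophet_rows(weekly: dict[date, float]) -> list[dict]:
    """Rename to the column names Prophet expects: ds (date) and y (value)."""
    return [{"ds": week, "y": revenue} for week, revenue in sorted(weekly.items())]


def split_train_validation(rows: list[dict], holdout_start: date):
    """Rows before holdout_start train the model; later rows are held out to check it."""
    train = [r for r in rows if r["ds"] < holdout_start]
    validation = [r for r in rows if r["ds"] >= holdout_start]
    return train, validation


# Hypothetical weekly revenue (week start -> revenue).
weekly = {
    date(2017, 7, 10): 1_000.0,
    date(2017, 7, 17): 1_100.0,
    date(2017, 7, 24): 1_600.0,
}
train, validation = split_train_validation(to_prophet_rows(weekly), date(2017, 7, 24))
print(len(train), len(validation))  # 2 1
```

Only `train` would be passed to the model. `validation` holds the week whose actual revenue is compared against the forecast.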

Here are our forecast and the actual revenue from paid search (cpc) for the week, along with a visual:

- Predicted: $11,564.94

- Actual: $16,808.60

- Difference: +45.3%

The plot shows:

- Black dots – the actual revenue from paid search (cpc)

- Blue shaded area – confidence intervals

- Blue line – forecasted revenue from paid search (cpc)
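The difference reported above is measured relative to the forecast: actual minus predicted, divided by predicted. Here is a short Python check using this post’s figures:

```python
def pct_difference(actual: float, predicted: float) -> float:
    """Percentage by which actual exceeds (+) or falls short of (-) the forecast."""
    return (actual - predicted) / predicted * 100


predicted = 11_564.94  # forecast revenue, paid search (cpc), week of July 24
actual = 16_808.60     # actual revenue for the same week
print(f"{pct_difference(actual, predicted):+.1f}%")  # +45.3%
```

Dividing by the actual value instead would give roughly 31%, so it helps to state the denominator whenever the difference is reported.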

Communicate & Integrate

Since our CMO – like most execs – loves concise communication, we’ll present our results as:

- The week of Monday, July 24 – Sunday, July 30 was a SUCCESS!

- Our forecasted revenue was $11,564.94 and the actual revenue was $16,808.60 – a +45% difference!
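We call the week a success because actual revenue beat the forecast. A slightly stricter rule also uses the forecast’s uncertainty interval (the blue shaded area); this is offered as an assumption, not the method used above. Prophet’s forecast output includes `yhat`, `yhat_lower` and `yhat_upper` columns. The interval bounds below are hypothetical, since the post doesn’t report them, and `classify_week` is an illustrative helper.

```python
def classify_week(actual: float, yhat: float, yhat_lower: float, yhat_upper: float) -> str:
    """Label a week by where actual revenue falls relative to the forecast interval."""
    if not yhat_lower <= yhat <= yhat_upper:
        raise ValueError("expected yhat_lower <= yhat <= yhat_upper")
    if actual > yhat_upper:
        return "SUCCESS"  # above the range the model considered plausible
    if actual < yhat_lower:
        return "FAILURE"  # below the plausible range
    return "WITHIN EXPECTATIONS"  # inside the interval: no clear signal either way


# Forecast and actual from this post; the interval bounds are hypothetical.
print(classify_week(16_808.60, yhat=11_564.94, yhat_lower=9_000.00, yhat_upper=14_000.00))
```

Under this rule, a week that beats `yhat` but stays inside the interval is reported as within expectations. That keeps the team from chasing noise.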

After our presentation to the CMO, we would likely take the following actions:

- Celebrate our win with our team’s favorite beverages and desserts! (we like wheat-grass shots and ice cream at E-Nor 🙂 )

- Dig deeper to investigate questions like “Why did revenue generated from paid search (cpc) exceed our forecast so greatly?”

The details of integrating these results are beyond the scope of this post, but generally speaking, we would:

- Confirm the CMO’s buy-in for our approach

- Implement a process to re-run this forecast each week

- Implement a process to add forecast values to our existing dashboards

- Create and implement a process to investigate why we hit or missed our target (e.g., “we missed our expected/predicted target of X because of Y and Z”)
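For the dashboard step, one simple approach is to join each week’s forecast to its actual value and precompute the variance, so the dashboard only has to display rows. This is an assumption, since the post doesn’t name a dashboard tool or schema. `dashboard_rows` is a hypothetical helper, and the single week shown uses this post’s figures.

```python
from datetime import date


def dashboard_rows(actuals: dict[date, float], forecasts: dict[date, float]) -> list[dict]:
    """Join actual and forecast revenue by week start and add a variance column."""
    rows = []
    for week in sorted(actuals.keys() & forecasts.keys()):  # weeks present in both
        actual, forecast = actuals[week], forecasts[week]
        rows.append({
            "week_start": week.isoformat(),
            "actual": actual,
            "forecast": forecast,
            "variance_pct": round((actual - forecast) / forecast * 100, 1),
        })
    return rows


actuals = {date(2017, 7, 24): 16_808.60}
forecasts = {date(2017, 7, 24): 11_564.94}
for row in dashboard_rows(actuals, forecasts):
    print(row)
```

Weeks with an actual but no forecast yet (or the reverse) are skipped rather than shown with a blank. Re-running the forecast each week then means adding the new week to both dictionaries and rebuilding the rows.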

Final thoughts

We hope this example shows how forecasting can help answer the age-old question, “Was this reporting period a success or failure?”, and improve accountability within your marketing team.

Forecasting is a powerful methodology that can answer fundamental questions like:

- What happens if nothing changes?

- Did something change?

Specifically, here are some other use cases that we can apply forecasting to:

- Maximize marketing budget ROI – “Assuming we make no changes to our marketing budget allocation, what can we expect our revenue to be?”

- Investigate “Why?” only when it counts – “Did KPI X change significantly?”

- Allocate resources to meet expected demand – “What products, product brands or categories can we expect to be in the highest demand this summer season?”

At E-Nor, we can help with the entire forecasting process: defining the business objective, collecting and storing clean, high-quality data and, of course, selecting and integrating the right forecasting model for the job.

Contact us to learn more about how we can help with Predictive Modeling and more!